Picture the scene.

Monday morning. Your AI agent opens an HR ticket:

“Time-off request: 3 days. Reason: need to recalibrate my inferences.”

Another one asks for a raise.

Impossible? Probably. Agents most likely won’t make those kinds of requests. So why think about HR for agents at all?

You have probably deployed AI agents in your organisation without:

- An inventory of who does what

- A structured onboarding process

- Access rights that are defined and reviewed

- A regular performance review

- An offboarding process when the agent is no longer useful

In other words: without an HR mindset, you’re managing your AI agents like interns left alone in the office on day one — with the keys to the building.

Building an HR system fit for your “digital workforce”

Matt Einig (Rencore) lays out the foundations for building an HR system adapted to your “digital workforce”:

| Human management | AI agent management |
|---|---|
| Job description | Agent scope (what, why, limits) |
| Onboarding | Controlled rollout + trial period |
| IT access rights | API permissions + data access |
| Performance review | Monitoring: cost, usage, errors, drift |
| Role change | Update the scope or the model |
| Offboarding | Deactivation + access clean-up |

The real issue is access.

Every AI agent you deploy potentially has access to:

- Your supplier data (prices, volumes, terms)

- Your contracts (clauses, commitments, penalties)

- Your internal exchanges (emails, Slack, Teams)

- Your financial tools (ERP, payment systems)

And unlike a human, an AI agent:

- Never sleeps

- Can be duplicated in 3 clicks

- Doesn’t understand confidentiality by intuition

- Can be hijacked if misconfigured, precisely because it has no intuition for what must stay confidential

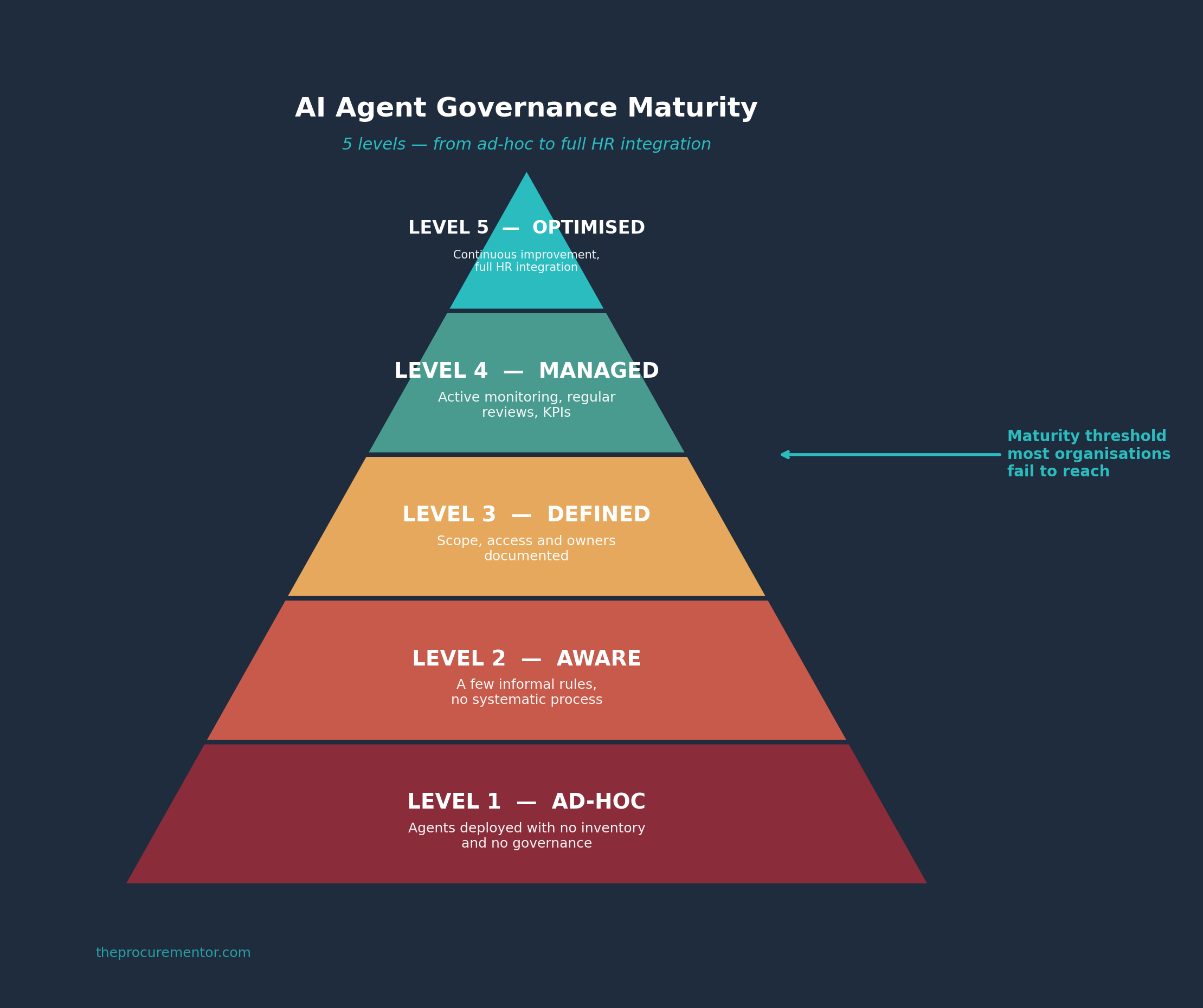

What mature companies do

Gartner estimates that by 2027, 80% of digital workplace tools will integrate generative AI. But most organisations sit below level 3 of governance maturity.

Companies that manage their AI agents well apply 4 principles:

1. Centralised registry

An inventory of every active agent: who built it, what its scope is, which data it accesses, who owns it. Exactly like an HR registry.
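To make the registry concrete, here is a minimal Python sketch, assuming an in-memory store. `AgentRecord`, `AgentRegistry` and every field name are hypothetical, chosen to mirror the four questions above (who built it, scope, data accessed, owner); a real registry would live in a database or a dedicated governance tool.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One registry entry (hypothetical fields mirroring the HR-registry questions)."""
    agent_id: str
    built_by: str  # who built it
    owner: str  # who is accountable for it
    scope: str  # what it does, why, and its limits
    data_access: set[str] = field(default_factory=set)  # data sources it can reach


class AgentRegistry:
    """In-memory inventory of active agents, keyed by agent_id."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        # Refuse agents without an accountable owner: no orphans in the inventory.
        if not record.owner:
            raise ValueError(f"agent {record.agent_id!r} has no owner")
        if record.agent_id in self._agents:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self._agents[record.agent_id] = record

    def agents_with_access_to(self, data_source: str) -> list[str]:
        # Audit question: "which agents can reach this data?"
        return sorted(
            a.agent_id for a in self._agents.values() if data_source in a.data_access
        )


registry = AgentRegistry()
registry.register(AgentRecord("po-triage", "it-team", "procurement-ops",
                              "Classify incoming purchase orders", {"erp.purchase_orders"}))
registry.register(AgentRecord("contract-qa", "legal-ops", "legal",
                              "Answer questions on contract clauses", {"contracts"}))
print(registry.agents_with_access_to("contracts"))  # ['contract-qa']
```

Rejecting owner-less entries at registration time is one way to stop the inventory from filling up with agents nobody answers for.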

2. Principle of least privilege

An agent only accesses the data strictly necessary for its mission. No “admin by default”. No “we’ll sort it out later”.
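Least privilege usually translates into "deny by default". A minimal sketch, assuming grants are stored as explicit (resource, action) pairs per agent. `GRANTS`, `is_allowed` and `find_overbroad_grants` are hypothetical names; in practice enforcement would sit in your identity provider or API gateway rather than in application code.

```python
# Hypothetical grant table: each agent lists only the (resource, action) pairs it needs.
GRANTS: dict[str, frozenset[tuple[str, str]]] = {
    "po-triage": frozenset({("erp.purchase_orders", "read")}),
    "invoice-matcher": frozenset({("erp.invoices", "read"),
                                  ("erp.purchase_orders", "read")}),
    "legacy-bot": frozenset({("*", "*")}),  # the "admin by default" anti-pattern
}


def is_allowed(agent_id: str, resource: str, action: str) -> bool:
    """Deny by default: anything not explicitly granted is refused.

    Wildcards are deliberately NOT expanded here, so an ("*", "*") grant
    matches nothing; find_overbroad_grants surfaces it for review instead.
    """
    return (resource, action) in GRANTS.get(agent_id, frozenset())


def find_overbroad_grants(grants: dict[str, frozenset[tuple[str, str]]]) -> list[str]:
    """List agents holding any wildcard grant."""
    return sorted(
        agent for agent, perms in grants.items()
        if any("*" in (resource, action) for resource, action in perms)
    )


print(is_allowed("po-triage", "erp.purchase_orders", "read"))   # True
print(is_allowed("po-triage", "erp.purchase_orders", "write"))  # False
print(is_allowed("unknown-agent", "contracts", "read"))          # False
print(find_overbroad_grants(GRANTS))                              # ['legacy-bot']
```

An unknown agent gets an empty grant set rather than an error, so a missing registry entry fails closed.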

3. Quarterly review

Like a performance appraisal: is the agent still useful? Is its cost justified? Are its access rights still relevant? Has it drifted from its original scope?
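The quarterly questions can be turned into automatic flags that prepare the review. A minimal sketch, assuming you already collect monthly cost, usage counts and the data sources each agent actually touched. `AgentStats`, `review_findings`, the 91-day interval and the cost threshold are all hypothetical, and comparing declared scope with actual access is only a rough proxy for drift.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=91)  # roughly one quarter (assumption)


@dataclass
class AgentStats:
    agent_id: str
    last_review: date
    monthly_cost: float  # in your currency, e.g. EUR
    monthly_uses: int
    declared: set[str]  # data sources declared in the agent's scope
    used: set[str]  # data sources it actually accessed this quarter


def review_findings(s: AgentStats, today: date, max_cost_per_use: float) -> list[str]:
    """Return flags for the quarterly review (empty list = nothing to flag)."""
    findings: list[str] = []
    if today - s.last_review > REVIEW_INTERVAL:
        findings.append("review overdue")
    # Still useful? Cost justified?
    if s.monthly_uses == 0:
        findings.append("unused: candidate for offboarding")
    elif s.monthly_cost / s.monthly_uses > max_cost_per_use:
        findings.append("cost per use above threshold")
    # Access still relevant? Declared but never used.
    if s.declared - s.used:
        findings.append("unused access: " + ", ".join(sorted(s.declared - s.used)))
    # Drifted? Used but never declared (rough proxy).
    if s.used - s.declared:
        findings.append("possible drift: " + ", ".join(sorted(s.used - s.declared)))
    return findings


stats = AgentStats("contract-qa", date(2024, 1, 15), 450.0, 3,
                   declared={"contracts", "erp.suppliers"},
                   used={"contracts", "mail.inbox"})
for finding in review_findings(stats, today=date(2024, 6, 1), max_cost_per_use=50.0):
    print(finding)
```

The flags do not replace the human appraisal; they simply make sure the review starts from facts rather than impressions.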

4. Systematic offboarding

When an agent is no longer used, you deactivate it AND revoke its access. Otherwise you end up with “ghost agents”: the digital equivalent of former employees’ Active Directory (AD) accounts that were never disabled.
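Offboarding has two halves, and skipping the second one is what creates ghost agents. A minimal sketch, assuming each agent’s credentials (API keys, service accounts) are tracked alongside it. `Agent`, `offboard`, `ghost_agents` and the `revoke` callback are hypothetical, with `revoke` standing in for whatever your identity provider or secrets manager actually exposes.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class Agent:
    agent_id: str
    active: bool = True
    credentials: set[str] = field(default_factory=set)  # e.g. API keys, service accounts


def offboard(agent: Agent, revoke: Callable[[str], None]) -> None:
    """Deactivate the agent AND revoke every credential it holds."""
    agent.active = False
    for credential in sorted(agent.credentials):  # sorted() copies, so clearing later is safe
        revoke(credential)  # hypothetical hook into your IdP / secrets manager
    agent.credentials.clear()


def ghost_agents(agents: Iterable[Agent]) -> list[str]:
    """Inactive agents that still hold credentials: the 'ghost agent' problem."""
    return sorted(a.agent_id for a in agents if not a.active and a.credentials)


fleet = [
    Agent("po-triage", credentials={"key-po"}),
    Agent("old-rfq-bot", active=False, credentials={"key-rfq"}),  # deactivated, never revoked
]
print(ghost_agents(fleet))  # ['old-rfq-bot']

revoked: list[str] = []
offboard(fleet[1], revoked.append)
print(revoked, ghost_agents(fleet))  # ['key-rfq'] []
```

Running a check like `ghost_agents` on a schedule catches the cases where someone flipped the switch but forgot the keys.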

For procurement leaders, it’s even more critical.

Our data is sensitive by nature: prices, margins, negotiation strategies, supplier evaluations. A poorly governed agent that exposes a price grid or a supplier scorecard is a competitive advantage going up in smoke.

A bad hire is expensive.

A bad AI agent is expensive too — but 200 times per second.

The question isn’t “should we deploy AI agents?”

It’s “do you have the governance to manage them?”

Link in the comments to the long-form article (fr/en), which includes Matt Einig’s piece on the “HR System for Digital Workforce” approach.

#AI #Governance #Procurement #DigitalWorkforce #AIAgents